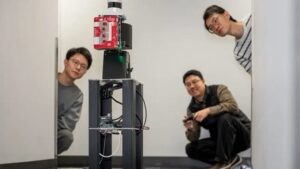

A close-up look at the HoloRadar attached to a mobile robot that was tested indoors at the University of Pennsylvania. Future testing will include outdoor intersections and beyond. Source: Sylvia Zhang/University of Pennsylvania

A new type of industrial imaging is emerging that uses radio waves, processed by artificial intelligence (AI), to allow machines to see around corners and anticipate hazards that are outside their direct line of sight.

The imaging technology could be a boon for safety and performance in autonomous vehicles, robotics and industrial asset tracking for warehouses and factories.

Researchers at the University of Pennsylvania recently developed a new system, called HoloRadar, that allows robots to reconstruct 3D scenes outside of their direct sight and around corners. This new type of perception works in darkness as well as in variable lighting conditions.

“Robots and autonomous vehicles need to see beyond what’s directly in front of them,” said Mingmin Zhao, assistant professor in Computer and Information Science (CIS) at Penn Engineering. “This capability is essential to help robots and autonomous vehicles make safer decisions in real time.”

How it works

Compared to visible light, radio signals have longer wavelengths. While this has previously been seen as a disadvantage for imaging because it limits resolution, Penn Engineering found that these longer wavelengths are an advantage when peering around corners.

“Because radio waves are so much larger than the tiny surface variations in walls, those surfaces effectively become mirrors that reflect radio signals in predictable ways,” said Haowen Lai, a doctoral student in CIS and participant in the project.

In other words, flat surfaces such as walls, floors and ceilings bounce radio signals around corners into hidden spaces and then back to the robot. HoloRadar captures these reflections and reconstructs the hidden scene so the robot knows what lies beyond its direct line of sight.

“It’s similar to how human drivers sometimes rely on mirrors stationed at blind intersections,” Lai said. “Because HoloRadar uses radio waves, the environment itself becomes full of mirrors, without actually having to change the environment.”
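To make the mirror analogy concrete, the sketch below applies the Rayleigh roughness criterion, a standard rule of thumb from wave physics rather than anything taken from the HoloRadar project itself. It says a surface reflects like a mirror when its height variations are smaller than the wavelength divided by eight times the cosine of the incidence angle. The 77 GHz radar frequency and the 0.1 mm wall roughness are illustrative assumptions; the article does not state HoloRadar's operating frequency.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def wavelength_m(frequency_hz):
    """Return the free-space wavelength in meters for a given frequency."""
    return SPEED_OF_LIGHT / frequency_hz


def looks_smooth(roughness_m, frequency_hz, incidence_deg=0.0):
    """Rayleigh criterion: the surface acts mirror-like when its roughness
    is below wavelength / (8 * cos(incidence angle from the normal))."""
    limit = wavelength_m(frequency_hz) / (8.0 * math.cos(math.radians(incidence_deg)))
    return roughness_m < limit


# All three values below are assumptions chosen for illustration.
RADAR_HZ = 77e9            # a common millimeter-wave radar band
GREEN_LIGHT_HZ = 545e12    # roughly green visible light
WALL_ROUGHNESS_M = 100e-6  # 0.1 mm of surface texture on a painted wall

print(looks_smooth(WALL_ROUGHNESS_M, RADAR_HZ))        # True: wall behaves like a mirror
print(looks_smooth(WALL_ROUGHNESS_M, GREEN_LIGHT_HZ))  # False: wall scatters light diffusely
```

Under these assumed numbers the radar wavelength is about 3.9 mm, thousands of times longer than visible light, which is why the same wall can scatter light in all directions yet reflect radio signals predictably.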

AI’s role

Penn Engineering created a custom AI system that combines machine learning with physics-based modeling. The system enhances the resolution of raw radio wave signals and identifies multiple returns that correspond to different reflection paths.

Then, the physics-guided model traces those reflections backward and reconstructs them into a 3D scene.

“In some sense, the challenge is similar to walking into a room full of mirrors,” said Zitong Lan, a doctoral student in Electrical and Systems Engineering (ESE) and researcher on the project. “You see many copies of the same object reflected in different places, and the hard part is figuring out where things really are. Our system learns how to reverse that process in a physics-grounded way.”

Researchers said modeling how radio waves bounce off surfaces allows the AI to distinguish between direct and indirect reflections. This lets it determine the true physical location of objects and people.
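The geometry behind that correction can be sketched for the simplest case of a single bounce off one flat wall. A reflected return makes a hidden object appear at a "ghost" position behind the wall, and mirroring that ghost back across the wall plane recovers where the object actually is. The code below is a minimal illustration under assumed coordinates, not the researchers' method; the function name `reflect_across_plane` and the scene layout are hypothetical.

```python
import math


def reflect_across_plane(point, plane_point, plane_normal):
    """Mirror a 3D point across a plane defined by a point on it and a normal."""
    norm = math.sqrt(sum(c * c for c in plane_normal))
    if norm == 0:
        raise ValueError("plane_normal must be non-zero")
    unit = tuple(c / norm for c in plane_normal)
    # Signed distance from the plane to the point, measured along the unit normal.
    distance = sum((p - q) * n for p, q, n in zip(point, plane_point, unit))
    # Move the point twice that distance back through the plane.
    return tuple(p - 2.0 * distance * n for p, n in zip(point, unit))


# Hypothetical scene, in meters: the radar sits at the origin and a flat
# wall lies on the plane x = 5.
WALL_POINT = (5.0, 0.0, 0.0)
WALL_NORMAL = (1.0, 0.0, 0.0)

ghost = (7.0, 3.0, 0.0)  # apparent position of a one-bounce return
actual = reflect_across_plane(ghost, WALL_POINT, WALL_NORMAL)
print(actual)  # (3.0, 3.0, 0.0)
```

Real scenes involve several surfaces and multiple bounces, so a full system would have to apply this kind of mirroring repeatedly and decide which surface each return came from, which is the harder part the article attributes to the physics-guided model.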

Engineers from the University of Pennsylvania test an imaging technology called HoloRadar on a mobile robot, allowing it to detect hidden objects and pedestrians outside its direct line of sight. Source: Sylvia Zhang/University of Pennsylvania

Potential use cases

According to Penn Engineering researchers, HoloRadar would help with safety systems for autonomous robots as a complement to other sensors rather than replacing them.

For example, in autonomous vehicles, lidar does the heavy lifting for the sensing system, using lasers to detect objects in the car’s surroundings and relaying that information back to the vehicle. HoloRadar would add another layer of perception by revealing objects around corners, giving the vehicle more time to react to potential problems.

“HoloRadar is designed to work in the kinds of environments robots actually operate in,” Zhao said. “This system is mobile, runs in real time and doesn’t depend on controlled lighting.”

In a cramped logistics environment where drones or autonomous machines use sensors to navigate through rows and identify where an object resides, HoloRadar could provide the robot with an additional view of objects coming around corners to avoid potential safety issues.

Researchers tested HoloRadar on a mobile robot indoors, in hallways and around building corners. The system was able to reconstruct walls, corridors and hidden human subjects located outside the robot’s line of sight.

Future testing will include outdoor scenarios such as intersections and urban streets, where longer distances and more varied environmental conditions will pose additional challenges.

“This is an important step toward giving robots a more complete understanding of their surroundings,” Zhao said. “Our long-term goal is to enable machines to operate safely and intelligently in the dynamic and complex environments humans navigate every day.”

Others developing similar tech

Not surprisingly, several vendors are developing industrial imaging technology for robotics and vehicles that is similar to the Penn Engineering system.

Probably the most similar is the approach of Israeli tech firm Arbe Robotics, which combines radar sensing with AI-based post-processing. The goal is to improve automotive perception and reduce false alarms, improving safety whether in advanced driver assistance systems (ADAS) for human-driven vehicles or in autonomous vehicles.

Arbe built 4D imaging radar chipsets that produce high-resolution images of surrounding environments, particularly in poor visibility, or for reconstruction of hidden scenes using RF reflections.

Another company developing 3D imaging radar-on-chip sensors is Vayyar Imaging. Its technology was originally developed for medical imaging, and the company has since pivoted to robotics, touchless fall detection and automotive sensors for ADAS features such as:

- Collision warnings

- Parking assistance

- Cross-traffic alerts

- Blind spot detection

- Autonomous emergency braking

- Reverse monitoring

- Adaptive cruise control

For additional information about the research, visit the University of Pennsylvania at: https://waves.seas.upenn.edu/projects/holoradar/

Author: Peter Brown, Electronics 360, GlobalSpec